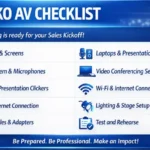

Enterprise Virtual Production & National Live Streaming Case Study

04/21/2026

Delivering executive communication at enterprise scale requires more than standard live streaming infrastructure.

This case study examines how a Fortune 500 SaaS organization executed a leadership broadcast across 18 U.S. states, achieving 99.99% uptime, zero packet loss, and a consistent audience experience across both in-room and remote environments.

- Client: Fortune 500 SaaS Enterprise (Annual Leadership Town Hall)

- Service: Virtual Event Production | Enterprise Live Streaming

- Scale: 1,200 In-Person Attendees | Remote Broadcast Across 18 U.S. States

- Technical Goal: 99.99% Broadcast Uptime | Sub-50ms Failover | SOC2-Compliant Transmission


1. The Challenge: High-Performance Executive Broadcasting at National Scale

Enterprise live streaming is unforgiving. When this Fortune 500 SaaS company prepared for its annual leadership town hall, the requirements were absolute:

- No observable signal interruptions during the live broadcast

- No perceptible audio degradation across in-room and remote audiences

- Identical experience for in-room and remote audiences

The event involved 1,200 in-person attendees and simultaneous broadcast distribution across 18 U.S. states.

A single technical failure during the CEO keynote would not be perceived as a minor disruption; it would directly impact leadership credibility across the organization.

Environmental Constraints

The venue introduced additional complexity:

- Deep ballroom layout with sightline limitations

- Large-scale seating distribution

- Need for broadcast-quality delivery across remote offices

This meant an employee watching remotely in Ohio had to experience the same clarity as someone seated in the front row in San Diego.

2. The Technical Solution: Broadcast-Grade Control Architecture

Eleven Eleven AV engineered a custom Master Control Centre, a broadcast-grade command environment that managed the full multi-input signal chain, from NDI camera feeds through to encrypted RTMP delivery at each remote hub.

Every technical decision served one non-negotiable output: flawless executive messaging at every seat, in every state, for the full four-hour broadcast window.

Multi-Camera Surgical Switching | 12G-SDI Infrastructure

Professional broadcast-grade video switchers managed real-time cuts between high-definition ISO camera feeds across all stage positions, delivering a TV-quality, dynamically directed program that sustained audience attention through the full session.

Confidence monitors at all FOH and production control positions ensured zero blind spots in the signal chain. Camera-to-switcher transmission ran via 12G-SDI cabling, eliminating compression artifacts in the master program feed.
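As a rough check on why a single uncompressed 12G-SDI link can carry a camera feed, the sketch below compares the active-video data rate of one feed with the nominal 12G-SDI link rate. The 2160p60, 10-bit 4:2:2 format is an assumption made for illustration, since the case study does not state the camera format. The 11.88 Gbit/s figure is the nominal 12G-SDI rate.

```python
# Nominal 12G-SDI link rate in bits per second (SMPTE ST 2082).
LINK_RATE_12G_SDI = 11.88e9


def active_video_bitrate(width, height, fps, bits_per_pixel):
    """Return the active-picture data rate in bits per second.

    Blanking intervals and embedded audio are ignored.
    """
    return width * height * fps * bits_per_pixel


# Assumed format: 3840x2160 at 60 fps, 10-bit 4:2:2 (20 bits per pixel on average).
rate = active_video_bitrate(3840, 2160, 60, 20)
headroom = LINK_RATE_12G_SDI - rate

print(f"Active video: {rate / 1e9:.2f} Gbit/s")
print(f"Headroom on 12G-SDI: {headroom / 1e9:.2f} Gbit/s")
```

Under these assumptions the active picture needs just under 10 Gbit/s, so the feed fits on the link without compression.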

M-Class Audio Precision Engineering | Yamaha DM7 Digital Console

Our FOH engineers operated a Yamaha DM7 Digital Audio Console performing real-time M-Class extraction, frequency balancing, and dynamic acoustic treatment to counteract the reverb signature of the 40,000 sq ft ballroom.

The result was broadcast-clean dialogue for both the in-room mix and the remote stream, regardless of venue acoustic challenges. Remote audiences across key regions, including the East Coast, Midwest, and Southern states, experienced the same audio and visual fidelity as in-room attendees.

High-Fidelity LED Matrix | Sub-Millimetre Pixel Mapping

To eliminate visual dead zones across the deep ballroom layout, we deployed a synchronized LED matrix system with sub-millimetre pixel mapping precision.

Every attendee, from the front row to rear overflow seating, received consistent visual clarity on all slide content, speaker transitions, and data-intensive executive presentations.

The LED matrix was synchronized frame-accurately with the program feed to prevent any visual lag between stage content and display surfaces.

SDI-over-IP Signal Distribution, NDI Workflow Integration

All internal signal routing used an SDI-over-IP infrastructure with NDI workflow integration, enabling lossless, low-latency signal transport between camera positions, the Master Control Centre (MCC), and the encoding stack.

This eliminated the signal degradation typically introduced by long copper runs in large venue environments and provided full flexibility for real-time signal rerouting without physical cable changes.
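Rerouting a signal without touching a cable can be pictured as updating a software crosspoint table. The sketch below is hypothetical: the `SignalRouter` class and the source and destination names are illustrative assumptions, not the control system used on site.

```python
class SignalRouter:
    """Minimal crosspoint table: each destination takes exactly one source."""

    def __init__(self, sources, destinations):
        self.sources = set(sources)
        self.destinations = set(destinations)
        self.routes = {}  # maps destination -> source

    def route(self, source, destination):
        """Point a destination at a source, replacing any previous route."""
        if source not in self.sources:
            raise ValueError(f"unknown source: {source}")
        if destination not in self.destinations:
            raise ValueError(f"unknown destination: {destination}")
        self.routes[destination] = source

    def source_for(self, destination):
        """Return the source currently feeding a destination, or None."""
        return self.routes.get(destination)


# Hypothetical signal names, for illustration only.
router = SignalRouter(
    sources=["cam_1", "cam_2", "slides"],
    destinations=["program_switcher", "encoder_a", "led_wall"],
)
router.route("cam_1", "encoder_a")
router.route("cam_2", "encoder_a")  # reroute in software, no cable change
print(router.source_for("encoder_a"))  # cam_2
```

Keeping one source per destination mirrors how a physical router behaves, which makes the software table easy to reason about during a live show.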

3. Risk Mitigation: The Triple-Layer Surgical Failover Protocol

In enterprise live streaming production, redundancy is not a feature; it is the foundation of the entire production architecture. Our engineers implemented a three-tier failover system designed to make a broadcast interruption highly improbable.

Layer 1: Bonded Internet Uplink

Primary broadcast transmission ran via dedicated fiber. An automated bonded cellular failover system, aggregating four independent carrier signals, operated in parallel throughout the entire event.

In the event of any fiber degradation, the transition to cellular bonding was designed to complete in under 50 ms, below the perceptible threshold for a live stream viewer at any remote location.
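The fiber-to-cellular decision can be sketched as a simple link selector. This is an illustrative model only: the `choose_uplink` function, the loss and latency thresholds, and the sample readings are assumptions, and a real bonding appliance makes this decision in its own hardware or firmware.

```python
from dataclasses import dataclass


@dataclass
class LinkHealth:
    name: str
    packet_loss_pct: float  # measured loss over the last sampling window
    latency_ms: float       # measured round-trip latency


# Hypothetical degradation thresholds; real values depend on the encoder and CDN.
MAX_LOSS_PCT = 0.5
MAX_LATENCY_MS = 80.0


def is_healthy(link: LinkHealth) -> bool:
    """A link is healthy when both loss and latency are within the thresholds."""
    return link.packet_loss_pct <= MAX_LOSS_PCT and link.latency_ms <= MAX_LATENCY_MS


def choose_uplink(fiber: LinkHealth, bonded_cellular: LinkHealth) -> str:
    """Prefer fiber; fall back to the bonded cellular path when fiber degrades."""
    if is_healthy(fiber):
        return fiber.name
    return bonded_cellular.name


# Sample readings (made up) in which the fiber path has degraded.
fiber = LinkHealth("fiber", packet_loss_pct=3.2, latency_ms=140.0)
bonded = LinkHealth("bonded_cellular", packet_loss_pct=0.1, latency_ms=45.0)
print(choose_uplink(fiber, bonded))  # bonded_cellular
```

Because the cellular path runs in parallel for the whole event, the switch is a change of selection rather than a cold start, which is what makes a fast transition plausible.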

Layer 2: Redundant RTMP Handshake

Parallel hardware encoders operated simultaneously throughout the full broadcast window, each maintaining independent RTMP handshakes to the content delivery network.

If the primary encoding unit experienced any fault condition, the secondary unit was configured to take over the stream with zero packet loss, keeping the switch invisible to viewers across all 18 U.S. states.
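A hot-standby encoder pair of this kind is often modelled with heartbeats. The sketch below is an assumption-laden illustration: the `Encoder` class, the `active_encoder` function, and the 0.5 second timeout are hypothetical and do not describe the encoders or CDN behavior used in this production.

```python
class Encoder:
    """Tracks the last time an encoder reported that it was publishing."""

    def __init__(self, name):
        self.name = name
        self.last_heartbeat = 0.0  # seconds, on a shared monotonic clock

    def beat(self, now):
        self.last_heartbeat = now


def active_encoder(primary, secondary, now, timeout=0.5):
    """Return the encoder whose stream should be treated as live.

    Both encoders publish continuously; the primary is used unless its
    heartbeat is older than `timeout` seconds.
    """
    if now - primary.last_heartbeat <= timeout:
        return primary
    return secondary


primary, secondary = Encoder("encoder_a"), Encoder("encoder_b")
primary.beat(now=10.0)
secondary.beat(now=10.0)

# At t = 10.2 s both heartbeats are fresh, so the primary stays live.
print(active_encoder(primary, secondary, now=10.2).name)  # encoder_a

# The primary stops reporting while the secondary keeps beating.
secondary.beat(now=11.0)
print(active_encoder(primary, secondary, now=11.0).name)  # encoder_b
```

Since the secondary is already publishing when the primary fails, no new connection has to be negotiated at the moment of failure.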

Layer 3: Power Security & Encrypted Transmission

All control positions, including the MCC, FOH audio position, and camera control units, were protected by independent UPS power systems, removing exposure to venue power fluctuations.

All transmission tunnels used 256-bit AES encryption, supporting SOC2 compliance requirements and protecting proprietary leadership communications from interception throughout the broadcast.

18-State Deployment Matrix: Nationwide Operational Logistics

Nationwide enterprise live streaming requires coordinated regional infrastructure, pre-validated signal paths, and on-call technical support at each remote node, not just a strong central hub.

Here is how our deployment was structured across the continental United States.

| Region | Primary Hubs Served | Technical Infrastructure Implemented |
| --- | --- | --- |
| West Coast | California, Washington, Arizona | On-site 4K Camera Ops, Yamaha DM7 Audio Mixing, & Sub-Millimetre LED Mapping |
| The South | Texas, Florida, Georgia, N. Carolina | Bonded Cellular Backup, Secure RTMP Handshakes, & Live Q&A Integration |
| East Coast | New York, Pennsylvania, New Jersey | Remote Producer Hubs, Encrypted Global Streams, & Low-Latency Signal Delivery |
| The Midwest | Illinois, Ohio, Massachusetts | Parallel Hardware Encoding & Synchronized Multi-Site Feed Alignment |

Performance Outcomes: Verified Results

All performance metrics were validated through post-event analytics and reviewed with internal operations and communications leadership within 48 hours.

99.99% Stream Integrity

Zero technical interruptions or signal degradation across the full four-hour live broadcast

97.4% Audience Retention Rate

Verified analytics confirmed remote viewers remained synchronized with the live program through to final sign-off

98% Positive Sentiment

Post-event survey: employees across all 18 remote states reported feeling highly connected to central leadership vision

Zero Packet Loss

No observable packet loss during the broadcast, ensuring uninterrupted viewing across multiple states
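To put the 99.99% figure in concrete terms, the short calculation below works out how much downtime that target allows over the four-hour broadcast window, and how many 50 ms failover events would fit inside it. The four-hour window, the 99.99% target, and the 50 ms bound come from the case study; treating each failover as a full 50 ms outage is a simplifying assumption.

```python
BROADCAST_HOURS = 4       # broadcast window stated in the case study
UPTIME_TARGET = 0.9999    # 99.99% uptime target
FAILOVER_MS = 50          # upper bound on a single uplink failover

broadcast_seconds = BROADCAST_HOURS * 3600
downtime_budget_s = broadcast_seconds * (1 - UPTIME_TARGET)

# Worst case: every failover counts as a complete 50 ms interruption.
failovers_within_budget = downtime_budget_s / (FAILOVER_MS / 1000)

print(f"Allowed downtime: {downtime_budget_s:.2f} s")
print(f"50 ms failovers that fit in the budget: {failovers_within_budget:.1f}")
```

Over four hours the target leaves a budget of roughly 1.44 seconds, which is why each failover layer has to complete in milliseconds rather than seconds.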

Ready to elevate your next executive broadcast?